FastAPI Tutorial Part 17: Performance and Caching

Optimize FastAPI performance with caching, async operations, connection pooling, and profiling. Build blazing-fast APIs.

Moshiour Rahman

Introduction

Performance in modern web APIs often boils down to a trade-off between latency (how fast one request returns) and throughput (how many requests per second you can handle).

In this tutorial, we will optimize FastAPI using:

- Redis Caching: Reduce database load.

- Connection Pooling: Reuse expensive DB connections.

- Async Optimizations: Handle concurrency properly.

Redis Caching

Caching is often the most effective way to improve read performance. By storing expensive query results in a fast in-memory store like Redis, you can serve repeated reads in milliseconds without touching the database.

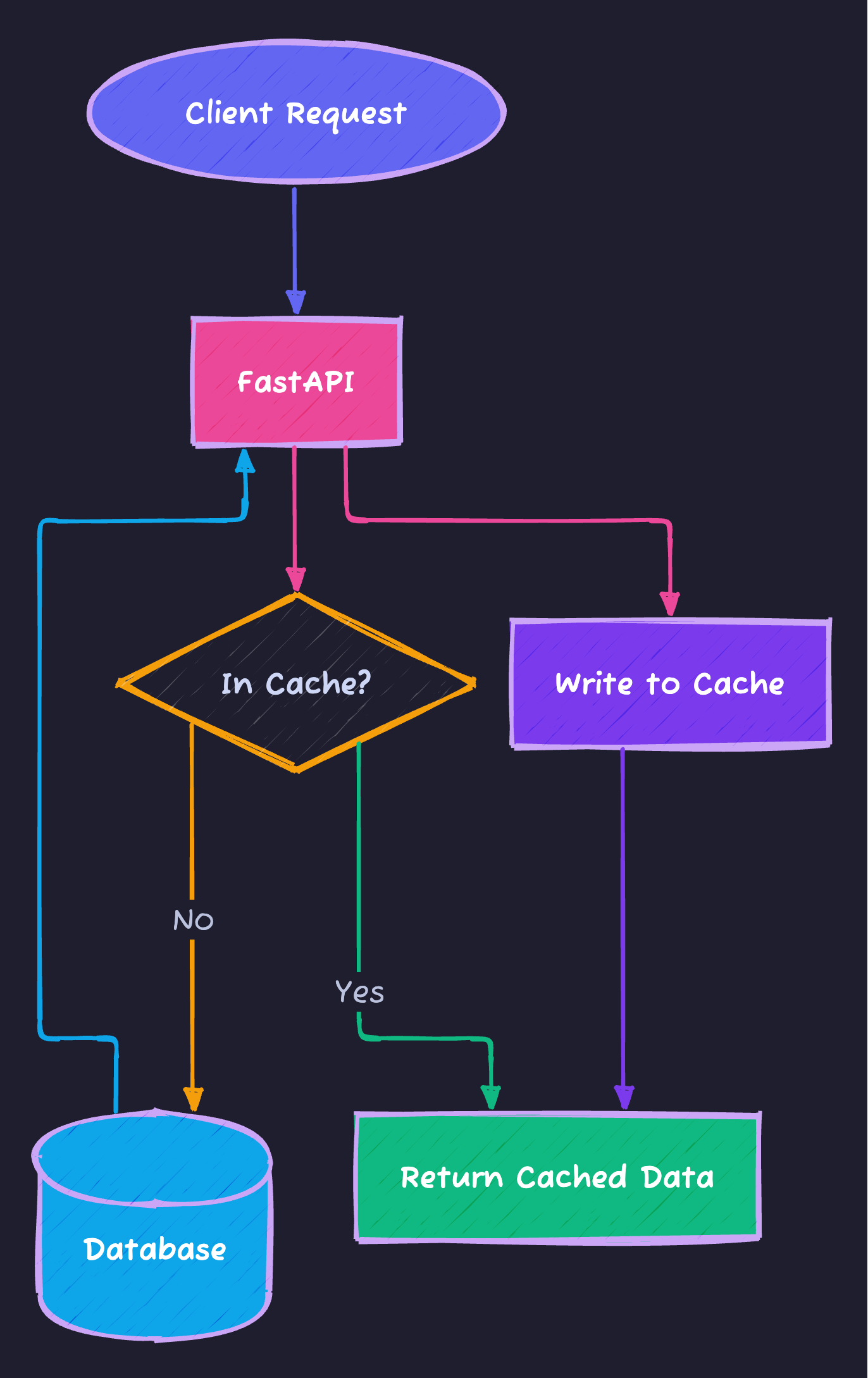

The Cache-Aside Pattern

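We typically use the Cache-Aside (or Lazy Loading) pattern: the application checks the cache first, and on a miss it loads the value from the database, writes it to the cache with a TTL, and returns it.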

Decorator Implementation

Here is a reusable decorator that implements the logic above:

    import json
    from functools import wraps

    import redis.asyncio as redis  # async client, so cache calls don't block the event loop

    # In production, use environment variables for host/port
    redis_client = redis.Redis(host="localhost", port=6379, db=0)

    def cache(ttl: int = 300):
        def decorator(func):
            @wraps(func)
            async def wrapper(*args, **kwargs):
                # Generate a key from the function name and its arguments
                key = f"{func.__name__}:{args}:{sorted(kwargs.items())}"

                # 1. Check Cache
                cached = await redis_client.get(key)
                if cached is not None:
                    return json.loads(cached)

                # 2. Miss? Call the actual function (DB call)
                result = await func(*args, **kwargs)

                # 3. Write to Cache with Expiration (TTL); result must be JSON-serializable
                await redis_client.setex(key, ttl, json.dumps(result))
                return result
            return wrapper
        return decorator

    @app.get("/products/{product_id}")
    @cache(ttl=60)
    async def get_product(product_id: int):
        # This slow DB call only runs on a cache miss
        return await fetch_product_from_db(product_id)

Cache Dependency

    from fastapi import Depends
    from redis import asyncio as aioredis  # the standalone aioredis package was merged into redis-py

    async def get_redis():
        client = aioredis.from_url("redis://localhost")
        try:
            yield client
        finally:
            await client.aclose()  # redis-py >= 5.0.1; use close() on older versions

    @app.get("/data/{key}")
    async def get_cached_data(key: str, redis = Depends(get_redis)):
        cached = await redis.get(key)
        if cached is not None:
            return {"data": cached.decode(), "cached": True}

        data = await fetch_data(key)  # fetch_data is assumed to return str or bytes
        await redis.setex(key, 300, data)
        return {"data": data, "cached": False}

Database Optimization

The database is usually the bottleneck. Two strategies help here: Connection Pooling and Async Drivers.

Connection Pooling

Creating a new DB connection is expensive (TCP handshake, auth, etc.). A pool keeps a set of open connections ready for reuse.
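
To make the reuse idea concrete, here is a toy pool built only on the standard library. `FakeConnection` and `SimplePool` are hypothetical names for illustration and are not part of SQLAlchemy. A real application would rely on the pool shipped with its driver or ORM.

```python
import queue
from contextlib import contextmanager


class FakeConnection:
    """Hypothetical stand-in for a real DB connection; counts how many get opened."""

    opened = 0

    def __init__(self) -> None:
        FakeConnection.opened += 1  # imagine a TCP handshake and auth here


class SimplePool:
    def __init__(self, size: int) -> None:
        self._idle: queue.Queue = queue.Queue(maxsize=size)
        for _ in range(size):
            self._idle.put(FakeConnection())  # pay the setup cost once, up front

    @contextmanager
    def connection(self, timeout: float = 5.0):
        conn = self._idle.get(timeout=timeout)  # blocks if every connection is in use
        try:
            yield conn
        finally:
            self._idle.put(conn)  # return it to the pool instead of closing it


pool = SimplePool(size=2)
for _ in range(100):
    with pool.connection() as conn:
        pass  # run a query with `conn` here

print(FakeConnection.opened)  # prints 2
```

One hundred checkouts are served by the same two connections. SQLAlchemy provides the same behaviour through engine settings such as `pool_size` and `max_overflow`.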

    from sqlalchemy import create_engine
    from sqlalchemy.pool import QueuePool

    engine = create_engine(
        DATABASE_URL,
        poolclass=QueuePool,
        pool_size=10,        # Keep 10 connections open
        max_overflow=20,     # Burst up to 30 total during load
        pool_pre_ping=True,  # Check if connection is alive before using
    )

Async Database

    from sqlalchemy.ext.asyncio import create_async_engine, AsyncSession

    engine = create_async_engine(
        "postgresql+asyncpg://user:pass@localhost/db",
        pool_size=10,
        max_overflow=20,
    )

Query Optimization

    from sqlalchemy import select
    from sqlalchemy.orm import selectinload

    # Eager loading to avoid N+1
    # (get_db is assumed to be a dependency that yields an AsyncSession)
    @app.get("/users")
    async def get_users(db: AsyncSession = Depends(get_db)):
        result = await db.execute(
            select(User).options(selectinload(User.posts))
        )
        return result.scalars().all()

Response Caching Headers

    from fastapi import Response

    @app.get("/static-data")
    async def get_static_data(response: Response):
        response.headers["Cache-Control"] = "public, max-age=3600"
        return {"data": "static content"}

Async Best Practices

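FastAPI is built on Starlette, which uses AnyIO on top of asyncio. To get maximum performance, the I/O-bound operations (DB calls, external APIs) inside your `async def` endpoints must be non-blocking, because a blocking call stalls the event loop for every request on that worker.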
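
When a blocking call cannot be avoided (for example, a library with no async API), one option is to push it onto a worker thread. The sketch below uses only the standard library. `blocking_report` and `heartbeat` are hypothetical helpers, and `asyncio.to_thread` requires Python 3.9 or newer.

```python
import asyncio
import time


def blocking_report() -> str:
    """Hypothetical blocking call, e.g. a sync SDK with no async API."""
    time.sleep(0.2)
    return "report ready"


async def heartbeat(ticks: list[float]) -> None:
    """Records timestamps; it can only run while the event loop is free."""
    for _ in range(4):
        ticks.append(time.perf_counter())
        await asyncio.sleep(0.05)


async def main() -> tuple[str, int]:
    ticks: list[float] = []
    start = time.perf_counter()
    # to_thread runs the blocking function in a worker thread,
    # so the heartbeat keeps ticking while it sleeps
    report, _ = await asyncio.gather(
        asyncio.to_thread(blocking_report),
        heartbeat(ticks),
    )
    ticks_during_call = sum(1 for t in ticks if t - start < 0.2)
    return report, ticks_during_call


report, ticks_during_call = asyncio.run(main())
```

FastAPI applies a similar idea automatically to endpoints declared with plain `def`, which it runs in a threadpool rather than on the event loop.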

Blocking vs Non-Blocking I/O

    sequenceDiagram
        participant Worker
        participant Ext as External API

        note right of Worker: Sync (Blocking)
        Worker->>Ext: Request 1
        Ext-->>Worker: Wait...
        Ext-->>Worker: Response 1
        Worker->>Ext: Request 2
        Ext-->>Worker: Response 2

        note right of Worker: Async (Concurrent)
        Worker->>Ext: Request 1
        Worker->>Ext: Request 2
        Ext-->>Worker: Response 1
        Ext-->>Worker: Response 2
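
The difference can be measured with a small standard-library experiment. This is a sketch under simplified assumptions: `DELAY` stands in for the latency of the external API, and the two coroutines are hypothetical placeholders for a blocking and a non-blocking client.

```python
import asyncio
import time

DELAY = 0.2  # pretend latency of the external API, in seconds


async def blocking_call() -> None:
    time.sleep(DELAY)  # blocks the whole event loop; nothing else can run


async def non_blocking_call() -> None:
    await asyncio.sleep(DELAY)  # yields to the event loop while waiting


async def timed(call) -> float:
    """Run two calls through gather and return the total elapsed seconds."""
    start = time.perf_counter()
    await asyncio.gather(call(), call())
    return time.perf_counter() - start


blocking_total = asyncio.run(timed(blocking_call))          # roughly 2 * DELAY
non_blocking_total = asyncio.run(timed(non_blocking_call))  # roughly 1 * DELAY
```

Even though both versions go through `asyncio.gather`, the blocking one still runs its calls back to back, because `time.sleep` never hands control back to the event loop.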

Parallel Execution with asyncio.gather

If you need to call multiple independent APIs, run them concurrently instead of awaiting each one in turn:

    import asyncio
    import httpx

    # Parallel external calls
    @app.get("/dashboard")
    async def get_dashboard():
        async with httpx.AsyncClient() as client:
            # Build one coroutine per request; nothing is sent yet
            tasks = [
                client.get("https://api1.com/data"),
                client.get("https://api2.com/data"),
                client.get("https://api3.com/data"),
            ]
            # gather schedules them together and waits for all to complete
            results = await asyncio.gather(*tasks)
            return {"data": [r.json() for r in results]}

Performance Monitoring

    import time
    from fastapi import Request

    @app.middleware("http")
    async def timing_middleware(request: Request, call_next):
        start = time.perf_counter()
        response = await call_next(request)
        duration = time.perf_counter() - start
        response.headers["X-Response-Time"] = f"{duration:.4f}s"
        return response

Summary

| Technique | Impact |
|---|---|
| Redis caching | Reduce DB load |
| Connection pooling | Efficient connections |
| Async operations | Better concurrency |
| Eager loading | Avoid N+1 queries |

Next Steps

In Part 18, we’ll explore API Security Best Practices - securing your FastAPI application.

Series Navigation:

- Part 1-16: Previous parts

- Part 17: Performance & Caching (You are here)

- Part 18: Security Best Practices

