API Design Part 7: Resilience & Observability

Master circuit breakers, retry patterns, and the three pillars of observability. Build fault-tolerant APIs with proper metrics, logging, and distributed tracing.

Moshiour Rahman


API Design Mastery Series

This is Part 7 of our comprehensive API Design series.

| Part | Topic | Level |
|---|---|---|
| 1 | HTTP & REST Fundamentals | Beginner |
| 2 | Security & Authentication | Beginner |
| 3 | Rate Limiting & Pagination | Intermediate |
| 4 | Versioning & Idempotency | Intermediate |
| 5 | Caching Strategies | Intermediate |
| 6 | GraphQL & gRPC | Intermediate |
| 7 | Resilience & Observability | Advanced |
| 8 | Production Mastery | Advanced |

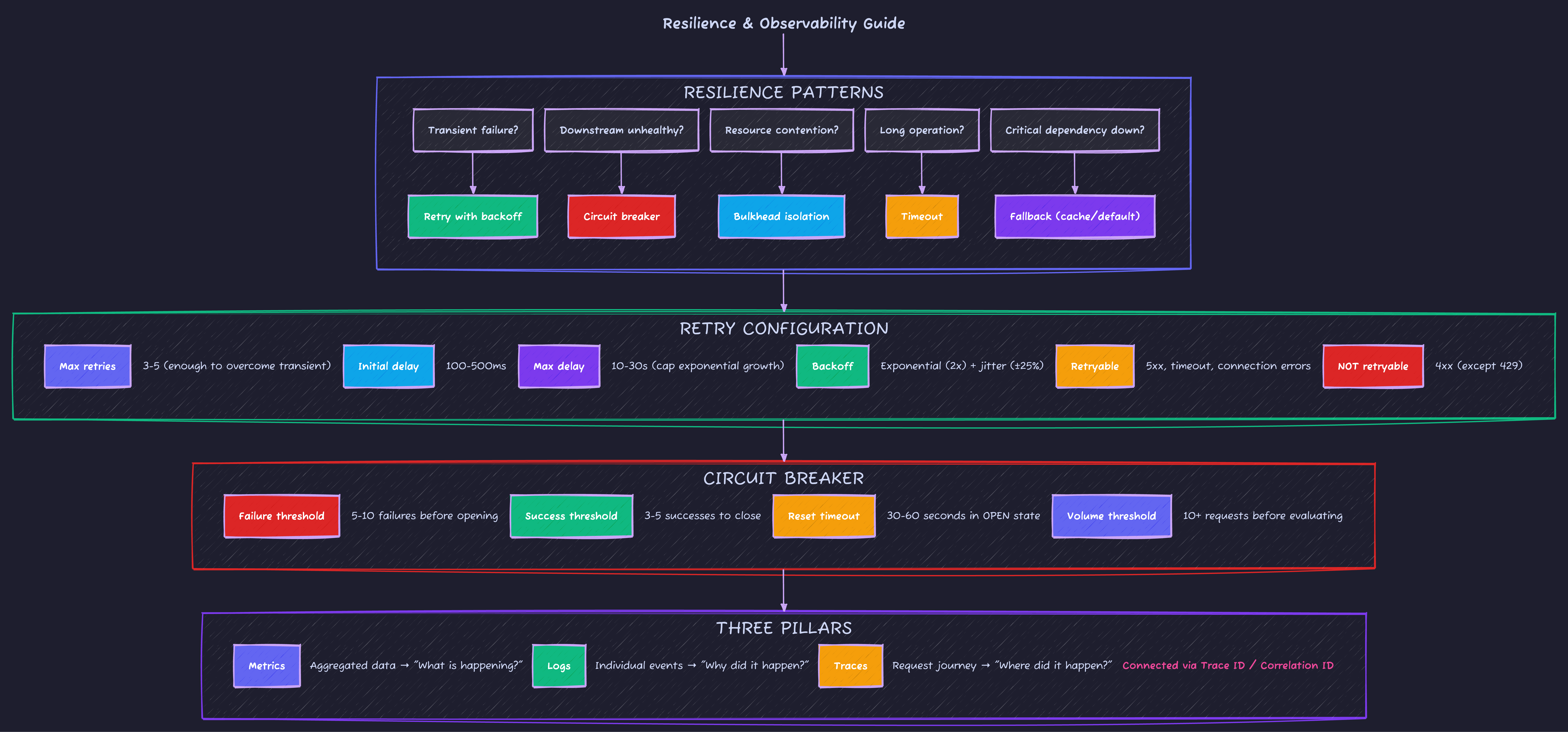

Resilience Patterns

Circuit Breaker State Machine

The circuit breaker pattern prevents cascading failures by failing fast when a service is unhealthy.

| State | Behavior | Transition |
|---|---|---|
| CLOSED | Normal operation, track failures | Opens when the failure threshold is reached |
| OPEN | Reject all requests immediately | Moves to HALF-OPEN after the timeout |
| HALF-OPEN | Allow a limited number of trial requests | Closes on success, re-opens on failure |

Circuit Breaker Implementation

```typescript
// circuit-breaker.ts - Production circuit breaker

enum CircuitState {
  CLOSED = 'CLOSED',      // Normal operation
  OPEN = 'OPEN',          // Failing, reject requests
  HALF_OPEN = 'HALF_OPEN' // Testing if service recovered
}

interface CircuitBreakerConfig {
  failureThreshold: number; // Consecutive failures before opening
  successThreshold: number; // Successes in half-open to close; also caps concurrent trial requests
  timeout: number;          // Time (ms) in open state before half-open
  volumeThreshold: number;  // Minimum requests before evaluating
  errorFilter?: (error: Error) => boolean; // Which errors count
}

export class CircuitBreaker {
  private state: CircuitState = CircuitState.CLOSED;
  private failures = 0;
  private successes = 0;
  private stateChangedAt = Date.now();
  private halfOpenSuccesses = 0;
  private halfOpenInFlight = 0;

  constructor(
    private name: string,
    private config: CircuitBreakerConfig
  ) {}

  async execute<T>(fn: () => Promise<T>): Promise<T> {
    // Check if we should transition from OPEN to HALF_OPEN
    if (this.state === CircuitState.OPEN) {
      if (Date.now() - this.stateChangedAt >= this.config.timeout) {
        this.transitionTo(CircuitState.HALF_OPEN);
      } else {
        throw new CircuitOpenError(this.name, this.getRemainingTimeout());
      }
    }

    // In HALF_OPEN, admit only a limited number of trial requests at a time
    const isTrial = this.state === CircuitState.HALF_OPEN;
    if (isTrial) {
      if (this.halfOpenInFlight >= this.config.successThreshold) {
        throw new CircuitOpenError(this.name, 0);
      }
      this.halfOpenInFlight++;
    }

    try {
      const result = await fn();
      this.onSuccess();
      return result;
    } catch (error) {
      if (error instanceof Error) {
        this.onFailure(error);
      }
      throw error;
    } finally {
      if (isTrial) {
        this.halfOpenInFlight--;
      }
    }
  }

  private onSuccess(): void {
    this.successes++;
    if (this.state === CircuitState.HALF_OPEN) {
      this.halfOpenSuccesses++;
      if (this.halfOpenSuccesses >= this.config.successThreshold) {
        this.transitionTo(CircuitState.CLOSED);
      }
    } else if (this.state === CircuitState.CLOSED) {
      this.failures = 0; // A success ends the run of consecutive failures
    }
  }

  private onFailure(error: Error): void {
    // Errors rejected by the filter (e.g. validation errors) don't count against the circuit
    if (this.config.errorFilter && !this.config.errorFilter(error)) {
      return;
    }
    this.failures++;
    if (this.state === CircuitState.HALF_OPEN) {
      // Any failed trial request re-opens the circuit
      this.transitionTo(CircuitState.OPEN);
    } else if (this.state === CircuitState.CLOSED) {
      const totalRequests = this.failures + this.successes;
      if (
        totalRequests >= this.config.volumeThreshold &&
        this.failures >= this.config.failureThreshold
      ) {
        this.transitionTo(CircuitState.OPEN);
      }
    }
  }

  private transitionTo(newState: CircuitState): void {
    console.log(`Circuit ${this.name}: ${this.state} -> ${newState}`);
    this.state = newState;
    this.stateChangedAt = Date.now();
    if (newState === CircuitState.CLOSED) {
      this.failures = 0;
      this.successes = 0;
      this.halfOpenSuccesses = 0;
    } else if (newState === CircuitState.HALF_OPEN) {
      this.halfOpenSuccesses = 0;
    }
  }

  private getRemainingTimeout(): number {
    return Math.max(0, this.config.timeout - (Date.now() - this.stateChangedAt));
  }
}

export class CircuitOpenError extends Error {
  constructor(public circuitName: string, public retryAfter: number) {
    super(`Circuit ${circuitName} is open. Retry after ${retryAfter}ms`);
    this.name = 'CircuitOpenError';
  }
}
```

Retry with Exponential Backoff

```typescript
// retry.ts - Sophisticated retry logic
// Only wrap idempotent operations (or requests carrying an idempotency key, see Part 4)

interface RetryConfig {
  maxRetries: number;
  initialDelay: number;      // Base delay in ms
  maxDelay: number;          // Cap on delay in ms
  backoffMultiplier: number; // Typically 2
  jitter: boolean;           // Add randomness to prevent thundering herd
  retryableErrors?: (error: Error) => boolean;
}

const sleep = (ms: number) => new Promise<void>((resolve) => setTimeout(resolve, ms));

export async function withRetry<T>(
  fn: () => Promise<T>,
  config: Partial<RetryConfig> = {}
): Promise<T> {
  const finalConfig: RetryConfig = {
    maxRetries: 3,
    initialDelay: 100,
    maxDelay: 10000,
    backoffMultiplier: 2,
    jitter: true,
    retryableErrors: isRetryableError, // By default, don't retry permanent (e.g. most 4xx) failures
    ...config,
  };

  let lastError: unknown;

  for (let attempt = 0; attempt <= finalConfig.maxRetries; attempt++) {
    try {
      return await fn();
    } catch (error) {
      lastError = error;
      if (
        finalConfig.retryableErrors &&
        !(error instanceof Error && finalConfig.retryableErrors(error))
      ) {
        throw error; // Permanent failure: retrying won't help
      }
      if (attempt < finalConfig.maxRetries) {
        const delay = calculateDelay(attempt, finalConfig);
        console.log(`Retry ${attempt + 1}/${finalConfig.maxRetries} after ${delay}ms`);
        await sleep(delay);
      }
    }
  }

  throw lastError;
}

function calculateDelay(attempt: number, config: RetryConfig): number {
  let delay = config.initialDelay * Math.pow(config.backoffMultiplier, attempt);

  // Add jitter (±25%) to prevent thundering herd
  if (config.jitter) {
    const jitterRange = delay * 0.25;
    delay = delay - jitterRange + Math.random() * jitterRange * 2;
  }

  // Cap after jitter so the delay never exceeds maxDelay
  return Math.floor(Math.min(delay, config.maxDelay));
}

// Utility to check if errors are retryable
export function isRetryableError(error: Error): boolean {
  const { code, status } = error as Error & { code?: string; status?: number };

  // Node.js network errors expose a `code`; the message usually contains it too
  const transientCodes = ['ECONNREFUSED', 'ECONNRESET', 'ETIMEDOUT', 'ENOTFOUND'];
  if (transientCodes.some((c) => c === code || error.message.includes(c))) return true;

  // HTTP errors that are typically transient (for 429, honor Retry-After if present)
  if (typeof status === 'number') {
    return [408, 429, 500, 502, 503, 504].includes(status);
  }

  return false;
}
```

Observability: The Three Pillars

| Pillar | What It Captures | Questions It Answers |
|---|---|---|
| Metrics | Aggregated numeric data (counters, gauges, histograms) | “What is the current state?” “Is it degraded?” |
| Logs | Individual events, structured JSON | “Why did this request fail?” “What happened?” |
| Traces | Request journey across services | “Where did time go?” “Which service failed?” |

All three are tied together by a correlation ID (typically the trace ID): when every log line and every span carries the same ID, you can follow a single request end to end.
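One common wire format for that ID is the W3C Trace Context traceparent header: 00-&lt;32-hex trace-id&gt;-&lt;16-hex span-id&gt;-&lt;2-hex flags&gt;. The sketch below is a hand-rolled illustration, not how an OpenTelemetry SDK is implemented. It continues an incoming trace (same trace ID, new span ID) or starts a new one. The function names are hypothetical, only version 00 is handled, and IDs come from Math.random, which is not cryptographically strong.

```typescript
// Minimal W3C traceparent handling (version 00 only), for illustration.
interface TraceContext {
  traceId: string; // Shared by every span in one request's journey
  spanId: string;  // Identifies this hop
  sampled: boolean;
}

const TRACEPARENT_RE = /^00-([0-9a-f]{32})-([0-9a-f]{16})-([0-9a-f]{2})$/;

// Random lowercase hex string (not cryptographically strong)
function randomHex(length: number): string {
  let out = '';
  for (let i = 0; i < length; i++) {
    out += Math.floor(Math.random() * 16).toString(16);
  }
  return out;
}

// Parse an incoming header; undefined if absent or malformed
function parseTraceparent(header: string | undefined): TraceContext | undefined {
  const match = header ? TRACEPARENT_RE.exec(header.trim()) : null;
  if (!match) return undefined;
  const [, traceId, spanId, flags] = match;
  // All-zero IDs are invalid per the spec
  if (/^0+$/.test(traceId) || /^0+$/.test(spanId)) return undefined;
  return { traceId, spanId, sampled: (parseInt(flags, 16) & 1) === 1 };
}

// Keep the caller's trace ID if there is one; always mint a new span ID for this hop
function continueOrStartTrace(incoming: string | undefined): TraceContext {
  const parent = parseTraceparent(incoming);
  return {
    traceId: parent?.traceId ?? randomHex(32),
    spanId: randomHex(16),
    sampled: parent?.sampled ?? true,
  };
}

// Header value to send on outgoing calls to downstream services
function formatTraceparent(ctx: TraceContext): string {
  return `00-${ctx.traceId}-${ctx.spanId}-${ctx.sampled ? '01' : '00'}`;
}
```

In a request handler you would call continueOrStartTrace with the incoming traceparent header, add ctx.traceId to every log entry, and send formatTraceparent(ctx) on outgoing calls so the next service joins the same trace.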

Essential API Metrics

| Metric | Type | Description |
|---|---|---|
| http_requests_total | Counter | Total requests by method, path, status |
| http_request_duration_seconds | Histogram | Response time distribution |
| http_requests_in_flight | Gauge | Current concurrent requests |
| circuit_breaker_state | Gauge | Current circuit state (0=closed, 1=open) |
| cache_hits_total | Counter | Cache hits (divide by hits + misses for the hit ratio) |
| db_connections_active | Gauge | Database connection pool usage |
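To show what a histogram like http_request_duration_seconds actually stores, here is a hand-rolled sketch that renders the Prometheus text exposition format. It is an illustration only. A real service would use a client library such as prom-client. The class name is hypothetical, and each instance covers a single label set for brevity.

```typescript
// Minimal histogram for one label set, rendered in Prometheus text format.
class LatencyHistogram {
  private readonly metricName: string;
  private readonly bounds: number[];      // Bucket upper bounds in seconds, ascending
  private readonly bucketCounts: number[];
  private sum = 0;
  private count = 0;

  constructor(metricName: string, bounds: number[]) {
    this.metricName = metricName;
    this.bounds = bounds;
    this.bucketCounts = bounds.map(() => 0);
  }

  observe(seconds: number): void {
    this.sum += seconds;
    this.count++;
    // Buckets are cumulative: a value counts toward every bucket whose bound it fits under
    this.bounds.forEach((le, i) => {
      if (seconds <= le) this.bucketCounts[i]++;
    });
  }

  // labels is a pre-formatted label string such as method="GET",route="/users/:id"
  render(labels = ''): string {
    const sep = labels ? `${labels},` : '';
    const own = labels ? `{${labels}}` : '';
    const lines = [`# TYPE ${this.metricName} histogram`];
    this.bounds.forEach((le, i) => {
      lines.push(`${this.metricName}_bucket{${sep}le="${le}"} ${this.bucketCounts[i]}`);
    });
    // The +Inf bucket always equals the total observation count
    lines.push(`${this.metricName}_bucket{${sep}le="+Inf"} ${this.count}`);
    lines.push(`${this.metricName}_sum${own} ${this.sum}`);
    lines.push(`${this.metricName}_count${own} ${this.count}`);
    return lines.join('\n');
  }
}
```

Labeling by route template (/users/:id) rather than the raw path keeps label values bounded. With raw paths, every distinct URL would create a new time series.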

Structured Logging

```typescript
// logger.ts - Production logging setup

interface LogContext {
  traceId: string;
  spanId?: string;
  userId?: string;
  requestId: string;
}

interface LogEntry {
  timestamp: string;
  level: 'debug' | 'info' | 'warn' | 'error';
  message: string;
  context: LogContext;
  duration_ms?: number;
  error?: {
    name: string;
    message: string;
    stack?: string;
  };
  [key: string]: unknown;
}

export function createLogger(baseContext: Partial<LogContext>) {
  return {
    info: (message: string, data?: Record<string, unknown>) =>
      log('info', message, { ...baseContext, ...data }),
    warn: (message: string, data?: Record<string, unknown>) =>
      log('warn', message, { ...baseContext, ...data }),
    error: (message: string, error?: Error, data?: Record<string, unknown>) =>
      log('error', message, {
        ...baseContext,
        ...data,
        error: error
          ? { name: error.name, message: error.message, stack: error.stack }
          : undefined,
      }),
  };
}

function log(level: LogEntry['level'], message: string, data: Record<string, unknown>): void {
  // Correlation fields go into `context`; everything else (duration_ms, error, ...) stays top-level
  const { traceId, spanId, userId, requestId, ...fields } = data;
  const asString = (v: unknown) => (typeof v === 'string' ? v : undefined);

  const entry: LogEntry = {
    ...fields, // Spread first so caller data can't overwrite the core fields below
    timestamp: new Date().toISOString(),
    level,
    message,
    context: {
      traceId: asString(traceId) ?? 'unknown',
      requestId: asString(requestId) ?? 'unknown',
      spanId: asString(spanId),
      userId: asString(userId),
    },
  };

  // One JSON object per line, so log shippers can parse each entry
  console.log(JSON.stringify(entry));
}
```

SLA/SLO Monitoring

| SLI (Indicator) | SLO (Objective) | Alert Threshold |
|---|---|---|
| Availability | 99.9% uptime | < 99.5% |
| Latency p50 | < 100ms | > 150ms |
| Latency p99 | < 500ms | > 750ms |
| Error rate | < 0.1% | > 0.5% |
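An SLO is easier to act on when expressed as an error budget. The sketch below assumes a 30-day window, which the table does not specify. Under that assumption, a 99.9% availability target leaves 0.1% of 43,200 minutes, about 43.2 minutes, and an error rate of 0.5% burns that budget roughly 5 times faster than the SLO allows. The function names are hypothetical.

```typescript
// Allowed "bad" minutes in the window for an availability SLO given in percent
function errorBudgetMinutes(sloPercent: number, windowDays: number): number {
  const windowMinutes = windowDays * 24 * 60;
  return windowMinutes * (1 - sloPercent / 100);
}

// Burn rate = observed error rate / error rate the SLO allows.
// 1 means the budget lasts exactly the window; 5 means it is gone in a fifth of it.
function burnRate(observedErrorRate: number, sloPercent: number): number {
  return observedErrorRate / (1 - sloPercent / 100);
}

// How long the whole budget lasts at a constant burn rate
function daysUntilBudgetExhausted(rate: number, windowDays: number): number {
  return windowDays / rate;
}
```

At a burn rate of 5, a 30-day budget is gone in about 6 days. That is why a 0.5% error rate deserves an alert even though it sounds small.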

Interview Question: “How do you debug a slow API endpoint in production?”

Strong Answer: “I follow a systematic approach using the observability pillars:

- Metrics first: Check whether it’s actually slow (p99 latency), how widespread the problem is (error rate, request volume), and when it started (time-series graphs).
- Traces second: Find slow requests via trace sampling and identify which service or operation is the bottleneck (database? external API? CPU-bound?).
- Logs last: Once I know the problematic service, search its logs filtered by trace ID to see the exact sequence of events.
- Common culprits: N+1 queries (check DB query count), missing indexes (slow query logs), cold caches (cache hit rate), resource exhaustion (connection pools, memory).
- Reproduce and fix: Use the trace and its correlated logs to reconstruct the request in staging, profile the code path, implement the fix, and verify it with a canary deployment.”
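The p99 in the first step is a percentile, not an average, and the gap between the two is often the whole story. Below is a nearest-rank sketch over raw samples. It is fine for a quick look at exported data, but metrics backends typically estimate percentiles from histogram buckets rather than keeping every sample. The function name is hypothetical.

```typescript
// Nearest-rank percentile: the smallest sample with at least p% of samples at or below it
function percentile(samples: number[], p: number): number {
  if (samples.length === 0) throw new Error('no samples');
  if (p <= 0 || p > 100) throw new RangeError('p must be in (0, 100]');
  const sorted = [...samples].sort((a, b) => a - b); // Numeric sort; default sort is lexicographic
  const rank = Math.ceil((p / 100) * sorted.length);  // 1-based rank
  return sorted[rank - 1];
}
```

With 98 requests at 20ms and 2 at 2000ms, the mean is 59.6ms and p50 is 20ms, but p99 is 2000ms. That slow tail is what the affected users actually experience.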

Health Check Endpoints

Every production API needs health checks for load balancers and orchestration:

```typescript
// health-checks.ts - Comprehensive health endpoints
// Assumes Express and a Prisma client. authMiddleware, checkRedis, checkExternalAPI,
// checkMemory and checkDisk are defined elsewhere and return ComponentHealth like checkDatabase.
import express from 'express';
import { PrismaClient } from '@prisma/client';

const app = express();
const db = new PrismaClient();

interface HealthStatus {
  status: 'healthy' | 'degraded' | 'unhealthy';
  timestamp: string;
  version: string;
  uptime: number;
  checks: Record<string, ComponentHealth>;
}

interface ComponentHealth {
  status: 'pass' | 'warn' | 'fail';
  latency_ms?: number;
  message?: string;
}

// /health/live - Is the process running? (for Kubernetes liveness)
app.get('/health/live', (req, res) => {
  res.status(200).json({ status: 'ok' });
});

// /health/ready - Can it accept traffic? (for Kubernetes readiness)
app.get('/health/ready', async (req, res) => {
  const [database, redis, externalApi] = await Promise.all([
    checkDatabase(),
    checkRedis(),
    checkExternalAPI(),
  ]);
  // Key results by name explicitly; ComponentHealth has no `name` field
  const checks: Record<string, ComponentHealth> = { database, redis, externalApi };
  const allPassing = Object.values(checks).every((c) => c.status === 'pass');

  res.status(allPassing ? 200 : 503).json({
    status: allPassing ? 'healthy' : 'unhealthy',
    checks,
  });
});

// /health/detailed - Full diagnostics (protect in production)
app.get('/health/detailed', authMiddleware, async (req, res) => {
  const [database, redis, disk] = await Promise.all([checkDatabase(), checkRedis(), checkDisk()]);
  const checks: Record<string, ComponentHealth> = { database, redis, memory: checkMemory(), disk };
  const results = Object.values(checks);

  const status: HealthStatus = {
    // Derive the overall status from the component results instead of hard-coding it
    status: results.some((c) => c.status === 'fail') ? 'unhealthy'
      : results.some((c) => c.status === 'warn') ? 'degraded'
      : 'healthy',
    timestamp: new Date().toISOString(),
    version: process.env.APP_VERSION || 'unknown',
    uptime: process.uptime(),
    checks,
  };

  res.json(status);
});

async function checkDatabase(): Promise<ComponentHealth> {
  const start = Date.now();
  try {
    await db.$queryRaw`SELECT 1`;
    return { status: 'pass', latency_ms: Date.now() - start };
  } catch (e) {
    return { status: 'fail', message: e instanceof Error ? e.message : String(e) };
  }
}
```

| Endpoint | Purpose | Auth | Response Time |
|---|---|---|---|
| /health/live | Process alive | None | <10ms |
| /health/ready | Ready for traffic | None | <500ms |
| /health/detailed | Full diagnostics | Required | <2s |
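The response-time targets above only hold if a single hung dependency cannot stall the endpoint. A sketch, assuming each check is an async function like checkDatabase: it runs all checks in parallel, gives each one a time budget, converts timeouts and thrown errors into "fail", and rolls the results up into healthy, degraded, or unhealthy. The type and function names here are hypothetical.

```typescript
type CheckStatus = 'pass' | 'warn' | 'fail';
type OverallStatus = 'healthy' | 'degraded' | 'unhealthy';

interface CheckResult {
  status: CheckStatus;
  latency_ms?: number;
  message?: string;
}

type HealthCheckFn = () => Promise<CheckResult>;

// Run one check; a timeout or a thrown error becomes a 'fail' result
async function runCheck(check: HealthCheckFn, budgetMs: number): Promise<CheckResult> {
  const start = Date.now();
  let timer: ReturnType<typeof setTimeout> | undefined;
  const timedOut = new Promise<CheckResult>((resolve) => {
    timer = setTimeout(
      () => resolve({ status: 'fail', message: `timed out after ${budgetMs}ms` }),
      budgetMs
    );
  });
  try {
    const result = await Promise.race([check(), timedOut]);
    return { latency_ms: Date.now() - start, ...result };
  } catch (e) {
    return { status: 'fail', message: e instanceof Error ? e.message : String(e) };
  } finally {
    clearTimeout(timer); // Don't leave the timer pending after the check settles
  }
}

// Checks run in parallel, so total time is roughly the slowest check, capped by budgetMs
async function aggregateHealth(
  checks: Record<string, HealthCheckFn>,
  budgetMs: number
): Promise<{ status: OverallStatus; checks: Record<string, CheckResult> }> {
  const names = Object.keys(checks);
  const results = await Promise.all(names.map((n) => runCheck(checks[n], budgetMs)));

  const byName: Record<string, CheckResult> = {};
  names.forEach((n, i) => {
    byName[n] = results[i];
  });

  let overall: OverallStatus = 'healthy';
  if (results.some((r) => r.status === 'fail')) overall = 'unhealthy';
  else if (results.some((r) => r.status === 'warn')) overall = 'degraded';

  return { status: overall, checks: byName };
}
```

One reasonable mapping is to return 503 from /health/ready only for "unhealthy" and to keep "degraded" instances in rotation while alerting on them.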

Timeout Guidelines

| Operation | Recommended Timeout | Reasoning |
|---|---|---|
| Database query | 5-30s | Depends on complexity |
| Redis cache | 100-500ms | Should be fast |
| External API | 3-10s | Varies by provider |
| File upload | 60-300s | Large files need time |
| HTTP client default | 30s | Reasonable default |
| Circuit breaker reset | 30-60s | Time to recover |
| Health check | 5s | Don’t wait forever |

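```typescript
// timeout-patterns.ts - Timeout handling

export class TimeoutError extends Error {
  constructor(message: string) {
    super(message);
    this.name = 'TimeoutError';
  }
}

// Promise with timeout wrapper
export function withTimeout<T>(
  promise: Promise<T>,
  ms: number,
  message = 'Operation timed out'
): Promise<T> {
  let timer: ReturnType<typeof setTimeout> | undefined;
  const timeout = new Promise<never>((_, reject) => {
    timer = setTimeout(() => reject(new TimeoutError(message)), ms);
  });
  // Clear the timer either way so it doesn't keep the process alive.
  // Note: this stops waiting, but it does not cancel the underlying operation.
  return Promise.race([promise, timeout]).finally(() => clearTimeout(timer));
}

// Usage (fetchUserFromDatabase and id are placeholders)
const user = await withTimeout(
  fetchUserFromDatabase(id),
  5000,
  'Database query timed out'
);
```

Common Resilience Mistakes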

| Mistake | Problem | Fix |
|---|---|---|
| No timeouts | Requests hang forever | Always set timeouts |
| Retry without backoff | Thundering herd | Exponential backoff + jitter |
| Retrying 4xx errors | Wastes resources; the request will fail again | Retry only network errors and transient statuses (408, 429, 500, 502-504) |
| Circuit breaker per request | Failure counts never accumulate, so it never opens | Share one breaker per downstream dependency |
| No fallback | Cascading failures | Return cached/default data |
| Ignoring partial failures | Silent degradation | Log and alert on failures |
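The "No fallback" fix can be as simple as serving the last known good value when the primary call fails. This is a sketch, assuming the primary call takes no per-request arguments and is already wrapped in timeout, retry, and circuit-breaker logic. withFallback is a hypothetical helper. Like a circuit breaker, it should be created once and shared across requests, or it will never have a cached value to fall back on.

```typescript
interface FallbackResult<T> {
  value: T;
  source: 'primary' | 'stale-cache' | 'default';
}

// Wrap a primary call so failures serve the last good value, then a static default
function withFallback<T>(fetchPrimary: () => Promise<T>, defaultValue: T) {
  let lastGood: { value: T } | undefined;

  return async function call(): Promise<FallbackResult<T>> {
    try {
      const value = await fetchPrimary();
      lastGood = { value };
      return { value, source: 'primary' };
    } catch (error) {
      // Don't degrade silently: record it so it can be counted and alerted on
      console.warn('primary call failed, serving fallback:', error instanceof Error ? error.message : error);
      if (lastGood) return { value: lastGood.value, source: 'stale-cache' };
      return { value: defaultValue, source: 'default' };
    }
  };
}
```

Returning the source alongside the value lets callers (and metrics) distinguish fresh data from stale data, which is what keeps a partial failure from going unnoticed.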

Observability Common Mistakes

| Mistake | Problem | Fix |
|---|---|---|
| Logging sensitive data | Security/compliance violation | Redact PII, passwords, tokens |
| High-cardinality labels | Prometheus memory explosion | Use bounded label values |
| No request IDs | Can’t correlate logs | Generate and propagate trace ID |
| Log level always DEBUG | Noise, storage costs | Use appropriate levels |
| No alerting on SLOs | Reactive incident response | Alert before users notice |
| Sampling traces at 100% | Storage/cost explosion | Sample 1-10% in production |
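Two of these fixes lend themselves to a short sketch: redacting sensitive keys before a log entry is serialized, and sampling by trace ID so every service makes the same keep/drop decision for a given trace. The key list, the 10% default rate, and the function names are assumptions. The hash is a simple FNV-1a over UTF-16 code units, a stand-in rather than OpenTelemetry's ratio sampler.

```typescript
// Keys are compared case-insensitively; extend this list for your own data
const SENSITIVE_KEYS = new Set(['password', 'token', 'authorization', 'apikey', 'secret', 'ssn']);

// Recursively copy a value, replacing sensitive fields with a marker
function redact(value: unknown): unknown {
  if (Array.isArray(value)) return value.map(redact);
  if (value !== null && typeof value === 'object') {
    const out: Record<string, unknown> = {};
    for (const [key, v] of Object.entries(value)) {
      out[key] = SENSITIVE_KEYS.has(key.toLowerCase()) ? '[REDACTED]' : redact(v);
    }
    return out;
  }
  return value;
}

// FNV-1a 32-bit hash of the trace ID, mapped to [0, 1)
function traceFraction(traceId: string): number {
  let hash = 0x811c9dc5;
  for (let i = 0; i < traceId.length; i++) {
    hash ^= traceId.charCodeAt(i);
    hash = Math.imul(hash, 0x01000193) >>> 0;
  }
  return hash / 0x100000000;
}

// Deterministic: the same trace ID always gets the same decision
function shouldSample(traceId: string, rate = 0.1): boolean {
  return traceFraction(traceId) < rate;
}
```

Because the decision depends only on the trace ID, a trace is either kept in full across all services or dropped everywhere, so sampled traces are complete.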


What’s Next?

Now that you understand resilience and observability, Part 8: Production Mastery covers interview questions, debugging scenarios, and advanced patterns.


Moshiour Rahman

Software Architect & AI Engineer

Enterprise software architect with deep expertise in financial systems, distributed architecture, and AI-powered applications. Building large-scale systems at Fortune 500 companies. Specializing in LLM orchestration, multi-agent systems, and cloud-native solutions. I share battle-tested patterns from real enterprise projects.

